Expectations for Next-Generation Internet Protocols

There is occasional talk of “after IPv4 comes IPv6, and then IPv8?” Yet no formal specification for IPv8 exists. The IP header’s version field is 4 bits wide, accommodating values 0–15. Version number 5 was already taken by the Internet Stream Protocol (ST), an experimental protocol first described in 1979, and IPv6 deliberately chose to be incompatible with IPv4. Proposals have surfaced for IPv7 and beyond, but none have been standardized by the IETF.
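To make the 4-bit version field concrete, here is a minimal sketch that reads it from the first octet of a packet. The helper name `ip_version` and the sample octets are illustrative assumptions, not taken from any particular library.

```python
def ip_version(packet: bytes) -> int:
    """Return the value of the 4-bit version field of an IP packet."""
    if not packet:
        raise ValueError("empty packet")
    # The version occupies the high-order 4 bits of the first octet.
    return packet[0] >> 4


# Sample first octets (illustrative):
IPV4_FIRST_OCTET = bytes([0x45])  # version 4, header length of 5 words
IPV6_FIRST_OCTET = bytes([0x60])  # version 6, upper traffic-class bits zero

print(ip_version(IPV4_FIRST_OCTET))
print(ip_version(IPV6_FIRST_OCTET))
# A 4-bit field can hold 2**4 distinct values, 0 through 15.
print(2 ** 4 - 1)
```

Because the field is only 4 bits wide, the version number space itself is small, which is part of why unused or experimental numbers matter.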

So what is actually being researched and standardized as a “next-generation protocol”? How do these approaches solve the problems IPv4 brought? This article aims to lay out the reality.

IPv4 is still in service, but problems are mounting

First, let’s review the current state. IANA’s pool of unallocated IPv4 addresses ran out in 2011. In practice, though, NAT, CGNAT, CDNs, proxies, and the address transfer market have kept things running well enough.

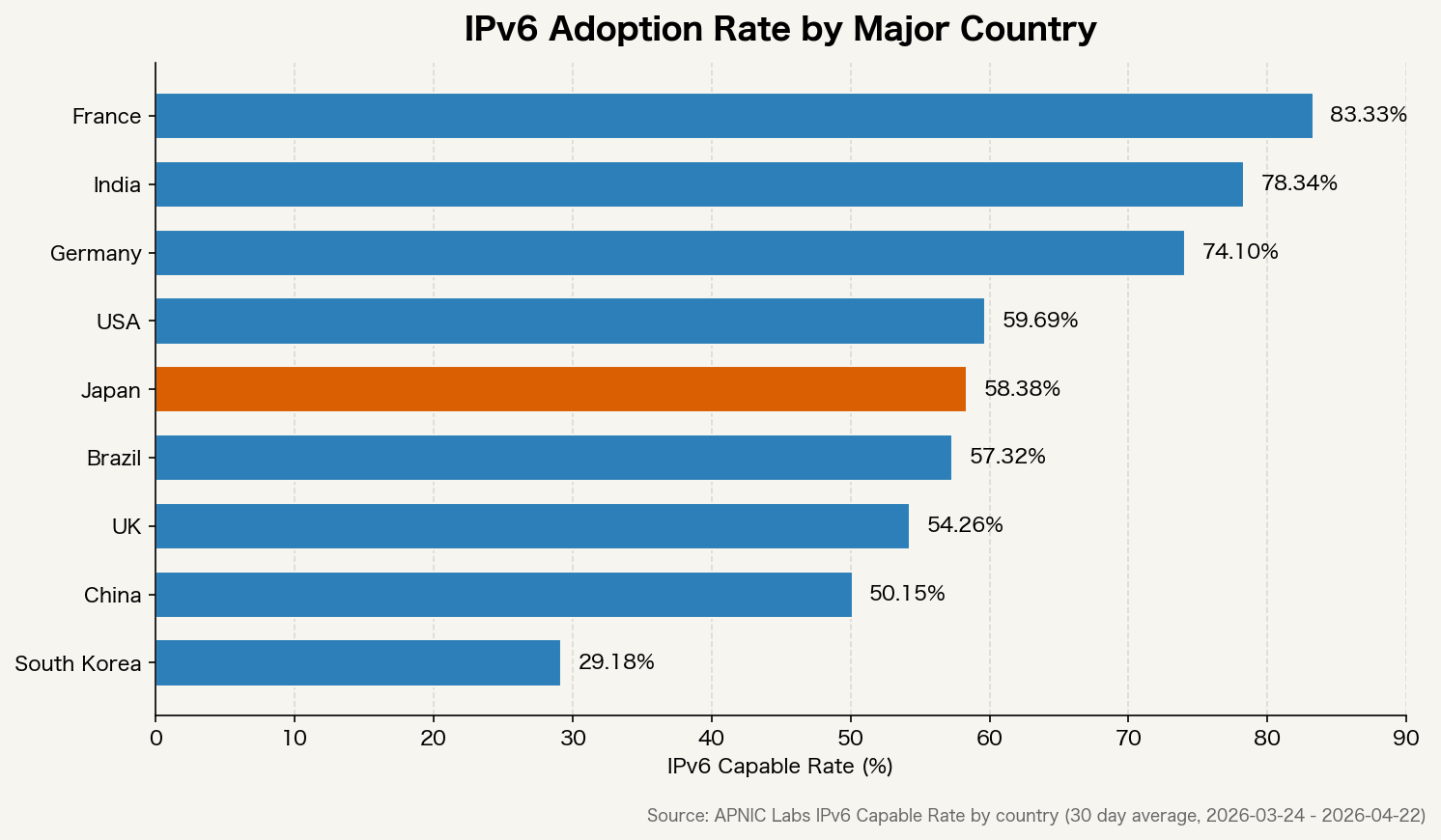

IPv6 adoption varies widely by country.

France and India have reached 70–90%, while Japan and South Korea remain below 50%. The slow adoption of IPv6 is not a standards problem — it’s that “NAT gets us by for now,” so migration never becomes a priority.

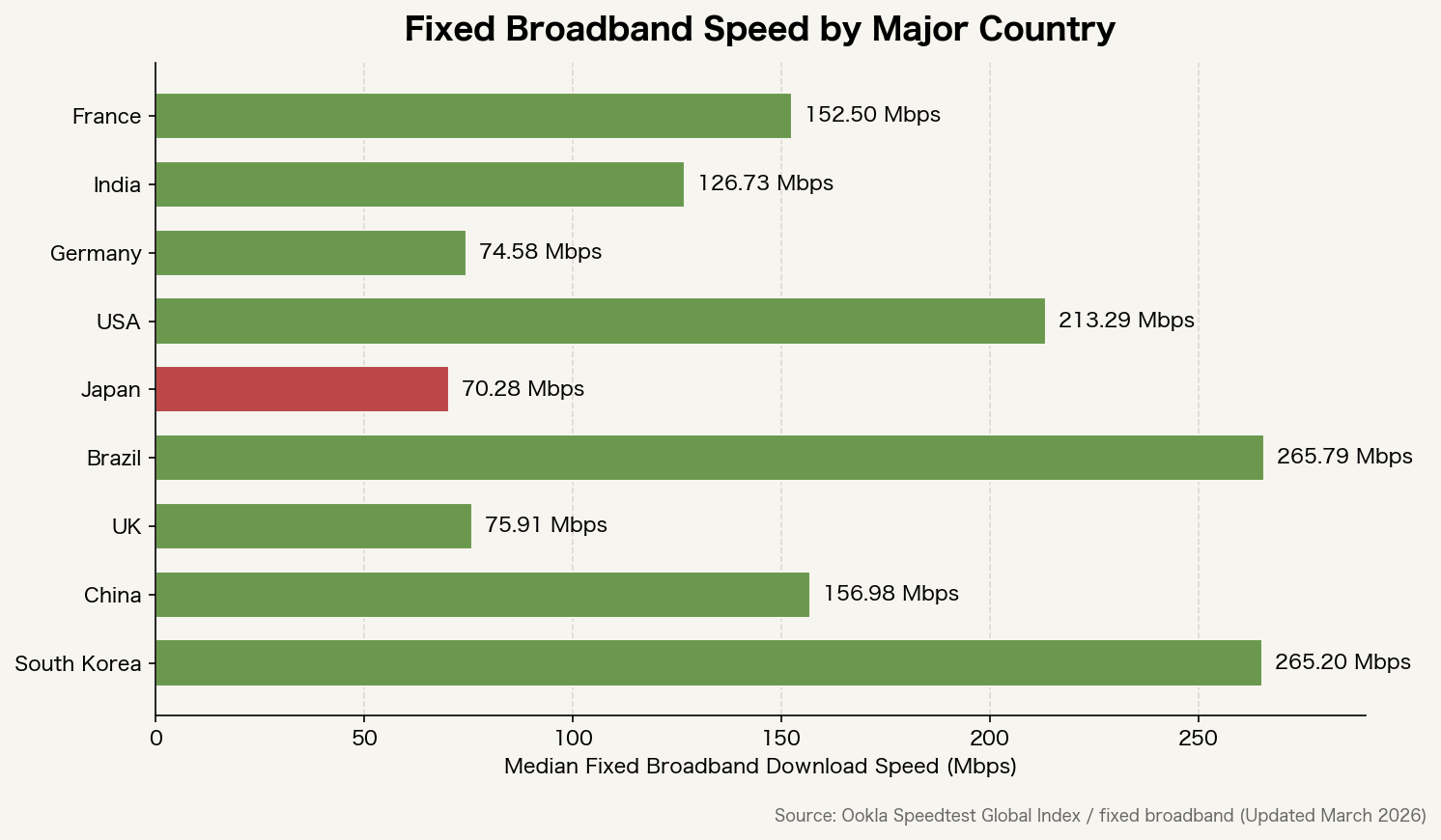

Fixed-line speeds also vary considerably by country.

The main driver of speed differences is infrastructure investment, but the extra layers of NAT and proxies do stack up latency. The more workarounds IPv4 accumulates, the less simple the routing path becomes.

Why IPv6 chose a “clean break” — and the cost

IPv6 abandoned backward compatibility with IPv4. This was a deliberate choice. Beyond extending address length from 32 to 128 bits, it redesigned header structures and aimed to restore end-to-end connectivity without relying on NAT.

But this “clean break” created migration friction. The dual-stack period — running IPv4 and IPv6 in parallel — dragged on, requiring double management of monitoring, firewalls, log analysis, and allowlists. Costs rose while visible benefits to end users remained slim. “No compelling reason to do it now” was easy to say.
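As a rough illustration of the “double management” point, the sketch below shows an allowlist check that has to carry entries for both address families. The prefixes are documentation ranges (RFC 5737 and RFC 3849), and `ALLOWLIST` and `is_allowed` are hypothetical names chosen for this example.

```python
import ipaddress

# Hypothetical dual-stack allowlist: every rule needs an IPv4 and an IPv6 entry.
ALLOWLIST = [
    ipaddress.ip_network("192.0.2.0/24"),   # IPv4 documentation prefix
    ipaddress.ip_network("2001:db8::/32"),  # IPv6 documentation prefix
]


def is_allowed(client: str) -> bool:
    """Return True if the client address falls inside any allowlisted network."""
    addr = ipaddress.ip_address(client)
    # Only compare against networks of the same address family.
    return any(addr.version == net.version and addr in net for net in ALLOWLIST)


print(is_allowed("192.0.2.10"))
print(is_allowed("2001:db8::1"))
print(is_allowed("198.51.100.7"))
```

Every firewall rule, log parser, and monitor ends up with a similar two-family shape during the dual-stack period.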

This lesson sparked the question: could IPv4 be extended while preserving compatibility? That thinking led to SRv6 and various transition technologies.

SRv6: extending IPv6 to encapsulate IPv4 as well

SRv6 (Segment Routing over IPv6) uses an IPv6 extension header to explicitly specify packet routing paths. The Segment Routing Header is specified in RFC 8754, and SRv6 network programming in RFC 8986.

The key is that IPv4 packets can be encapsulated inside SRv6 for forwarding — meaning IPv4 traffic can ride on top of IPv6 routing logic. It approximates an “IPv4 superset” concept as an IPv6 extension.
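To show roughly what “specifying the path in an extension header” means, here is a minimal sketch that packs a Segment Routing Header following the field layout in RFC 8754, with the Next Header set to 4 to indicate an encapsulated IPv4 packet. The function name `build_srh` is hypothetical, the segment addresses are placeholders from the documentation prefix, and flags, tag, and optional TLVs are simply left at zero or omitted.

```python
import ipaddress
import struct
from typing import Sequence

SRH_ROUTING_TYPE = 4   # Routing Type value for the Segment Routing Header
NEXT_HEADER_IPV4 = 4   # protocol number for an encapsulated IPv4 packet


def build_srh(path: Sequence[str], next_header: int = NEXT_HEADER_IPV4) -> bytes:
    """Pack an SRH for `path`, given in the order the packet should visit."""
    segments = [ipaddress.IPv6Address(s).packed for s in path]
    if not segments:
        raise ValueError("path must contain at least one segment")
    n = len(segments)
    fixed = struct.pack(
        "!BBBBBBH",
        next_header,
        2 * n,             # Hdr Ext Len: 8-octet units after the first 8 octets
        SRH_ROUTING_TYPE,
        n - 1,             # Segments Left: index of the first segment to visit
        n - 1,             # Last Entry: index of the last element in the list
        0,                 # Flags (unused in this sketch)
        0,                 # Tag (unused in this sketch)
    )
    # The segment list is encoded in reverse: Segment List[0] is the final segment.
    return fixed + b"".join(reversed(segments))


srh = build_srh(["2001:db8::1", "2001:db8::2", "2001:db8::3"])
print(len(srh), srh[:8].hex())
```

The IPv4 packet itself would follow this header as payload; building the outer IPv6 header is left out to keep the sketch short.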

SRv6 addresses several challenges:

- Route control without MPLS labels (reduces label-stack complexity)

- Fine-grained traffic engineering per route

- Unified routing across cloud and carrier network boundaries

- A single forwarding plane for mixed IPv4/IPv6 environments

NTT, China Telecom, Alibaba, and others are deploying it commercially, particularly for large-scale datacenter interconnects and 5G core networks.

SCION: redesigning routing itself

While SRv6 extends IPv6, SCION (Scalability, Control, and Isolation On Next-generation networks) aims at a more fundamental redesign. Led by ETH Zürich, it was presented at IEEE Security & Privacy 2011.

SCION’s core idea is giving the sender control over routing paths. Today’s internet uses BGP (Border Gateway Protocol) to determine routes, and senders have no control over which path their packets take. With SCION, senders can explicitly specify routes.

This enables:

- Prevention of arbitrary route changes by ISPs or governments (route hijacking defense)

- Sender-chosen paths based on latency, bandwidth, and reliability

- Failures localized to specific ASes (autonomous systems)

- Built-in authentication in the architecture, making spoofing harder
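The sender-side choice described above can be sketched as a simple selection over candidate paths. This is a toy model under stated assumptions, not SCION's actual API: the `Path` class, the `choose_path` function, the AS identifiers, and the latency figures are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    hops: tuple        # AS identifiers along the path, in order
    latency_ms: float  # measured or advertised latency (illustrative)


def choose_path(candidates, avoid=frozenset()):
    """Pick the lowest-latency path that crosses none of the ASes in `avoid`."""
    usable = [p for p in candidates if not avoid.intersection(p.hops)]
    if not usable:
        raise LookupError("no path satisfies the sender's policy")
    return min(usable, key=lambda p: p.latency_ms)


# Hypothetical candidate paths between the same two end ASes.
CANDIDATES = [
    Path(("1-ff00:0:110", "1-ff00:0:120", "1-ff00:0:130"), 42.0),
    Path(("1-ff00:0:110", "1-ff00:0:121", "1-ff00:0:130"), 55.0),
]

print(choose_path(CANDIDATES).hops)
print(choose_path(CANDIDATES, avoid=frozenset({"1-ff00:0:120"})).hops)
```

The point is where the decision lives: the policy runs at the sender, whereas with BGP the equivalent choice is made hop by hop inside the network.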

Switzerland’s financial-sector network “SSFN” (Secure Swiss Finance Network) runs it in production. It can also operate as an overlay on top of IPv4/IPv6, allowing coexistence with existing infrastructure.

NDN: routing by content name, not IP address

NDN (Named Data Networking) proposes routing packets by content name rather than address. It is one of the Future Internet Architecture projects funded by the NSF (National Science Foundation).

Today’s internet is designed around “which host to send to.” NDN centers on “what to retrieve.” Content is named, and routing is done by that name.

This enables:

- In-network caching of identical content — CDN-like functionality at the infrastructure layer

- Integrity bound to names, making tampering detectable

- Natural handover in mobile environments without source-address dependency
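A toy model may help here: a node that answers requests from its cache when it can, and otherwise forwards by longest-prefix match on the content name. The class `NdnNode` and its methods are hypothetical, and real NDN forwarders also keep a pending-interest table and verify signatures, both omitted from this sketch.

```python
def split_name(name: str) -> tuple:
    """Split a hierarchical name such as /video/intro/seg1 into components."""
    return tuple(part for part in name.split("/") if part)


class NdnNode:
    def __init__(self):
        self.fib = {}            # name prefix (tuple of components) -> next hop
        self.content_store = {}  # full name (tuple of components) -> payload

    def add_route(self, prefix: str, next_hop: str) -> None:
        self.fib[split_name(prefix)] = next_hop

    def next_hop(self, name: str):
        """Longest-prefix match on name components instead of address bits."""
        parts = split_name(name)
        for length in range(len(parts), 0, -1):
            hop = self.fib.get(parts[:length])
            if hop is not None:
                return hop
        return None

    def on_interest(self, name: str):
        """Serve from the in-network cache if possible, otherwise forward."""
        key = split_name(name)
        if key in self.content_store:
            return ("data", self.content_store[key])
        return ("forward", self.next_hop(name))

    def on_data(self, name: str, payload: bytes) -> None:
        self.content_store[split_name(name)] = payload


node = NdnNode()
node.add_route("/video", "face-1")
node.add_route("/video/intro", "face-2")
print(node.on_interest("/video/intro/seg1"))
node.on_data("/video/intro/seg1", b"chunk")
print(node.on_interest("/video/intro/seg1"))
```

The second request never leaves the node, which is the CDN-like behavior described in the list above.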

However, compatibility with existing IP infrastructure is low, and near-term adoption prospects are unclear. Partial adoption is progressing in IoT and edge computing.

QUIC/HTTP3: absorbing IP version differences at a higher layer

Rather than changing the architecture, another approach absorbs IP version differences at a higher layer. QUIC (RFC 9000) exemplifies this.

QUIC runs on top of UDP and does not directly use the IP address and port pair as a connection identifier. Instead, it uses a connection-specific ID, so connections persist even when the IP address changes.

This gives the upper layers the same quality of communication over both IPv4 and IPv6. HTTP/3 runs on top of QUIC. Most major browsers and servers now support it.
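The connection-ID idea can be sketched as a lookup table keyed by the ID rather than by address and port. This is a simplified model with hypothetical names (`ConnectionTable`, `open`, `receive`); actual QUIC validates a new path before migrating to it (RFC 9000, section 9), which the sketch only notes in a comment.

```python
class ConnectionTable:
    """Toy demultiplexer that identifies connections by connection ID."""

    def __init__(self):
        self._by_cid = {}

    def open(self, cid: bytes, peer: tuple) -> None:
        self._by_cid[cid] = {"peer": peer, "packets": 0}

    def receive(self, cid: bytes, source: tuple):
        conn = self._by_cid.get(cid)
        if conn is None:
            return None  # unknown connection ID
        if conn["peer"] != source:
            # The peer's address changed (for example Wi-Fi to cellular, or
            # IPv4 to IPv6). Real QUIC performs path validation first; this
            # sketch just records the new address.
            conn["peer"] = source
        conn["packets"] += 1
        return conn


table = ConnectionTable()
table.open(b"\x1a\x2b", ("192.0.2.1", 4433))
table.receive(b"\x1a\x2b", ("192.0.2.1", 4433))
conn = table.receive(b"\x1a\x2b", ("2001:db8::1", 4433))
print(conn["peer"], conn["packets"])
```

A table keyed by the address and port tuple would have treated the second packet as a brand-new connection.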

How far has “IPv4 backward compatibility” actually been realized?

The closest practical realization of “IPv4 backward compatibility” is the combination of SRv6 and transition technologies like MAP-T.

MAP-T (Mapping of Address and Port using Translation, RFC 7599) statelessly translates IPv4 packets into IPv6 at the customer edge, carries them across an IPv6 network, and translates them back to IPv4 at the exit. Endpoints can stay on IPv4 while the backbone migrates to IPv6.
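One building block of this kind of stateless translation is embedding an IPv4 address inside an IPv6 address. The sketch below uses the /96 variant of the IPv4-embedded IPv6 address format from RFC 6052 with the well-known prefix 64:ff9b::/96. It is only an illustration of the address mapping: it does not implement MAP-T's port-set rules, and the function names are hypothetical.

```python
import ipaddress


def embed_ipv4(prefix96: str, ipv4: str) -> ipaddress.IPv6Address:
    """Place an IPv4 address in the low 32 bits of a /96 IPv6 prefix."""
    net = ipaddress.IPv6Network(prefix96)
    if net.prefixlen != 96:
        raise ValueError("this sketch only handles /96 prefixes")
    v4 = int(ipaddress.IPv4Address(ipv4))
    return ipaddress.IPv6Address(int(net.network_address) | v4)


def extract_ipv4(ipv6: str) -> ipaddress.IPv4Address:
    """Recover the IPv4 address from the low 32 bits (the reverse direction)."""
    return ipaddress.IPv4Address(int(ipaddress.IPv6Address(ipv6)) & 0xFFFFFFFF)


mapped = embed_ipv4("64:ff9b::/96", "192.0.2.33")
print(mapped)
print(extract_ipv4(str(mapped)))
```

Because the mapping is a pure function of the address, no per-session state is needed at the translator, which is what “stateless” means here.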

Combining these technologies creates a working configuration where:

- End users keep using IPv4

- The core network is designed on IPv6

- Route control is unified under SRv6

A new version number called “IPv8” is unnecessary — existing standards in combination can reach a similar goal.

What is actually happening now

Beyond research and standards, here is what is actually in motion today:

- 5G SA (Standalone): Core network design is IPv6-native. Built into 3GPP standards

- SRv6 commercial deployment: China Telecom, NTT, SoftBank, and others have adopted it for domestic backbones

- SCION in production: Running on the Swiss financial network (SSFN)

- Apple App Store review: Requires verified operation in IPv6-only environments, forcing app-side compliance

- Cloudflare / Google: IPv6 traffic share continues to rise yearly; absorbing IPv4/IPv6 at the edge is now standard

The “next version” of IP won’t appear as a single new standard. It will be these technologies gradually replacing each layer.

What can be done now is to slowly reduce dependence on IP addresses: stop using fixed-IP allowlists, move to certificate- and identity-based authentication, use DNS properly, and leverage CDN and edge. That preparation works regardless of which next-generation protocol arrives.

Personal take: extending IPv4 to 8 octets would have been better

Let me add a personal view at the end.

Seeing that nearly 30 years have passed without meaningful migration since IPv6 chose a “clean break,” I find myself wondering if the design direction was simply wrong.

What I think would have been ideal is extending IPv4’s address notation to 8 octets.

255.255.255.255.255.255.255.255

In other words, stretching today’s x.x.x.x (32-bit) to x.x.x.x.x.x.x.x (64-bit).

Address space expands dramatically

32-bit IPv4 provides about 4.3 billion addresses (2³²). 64-bit gives roughly 18.4 quintillion addresses (2⁶⁴, about 1.8 × 10¹⁹). That exceeds common estimates of the number of grains of sand on Earth, so even with 35 billion IoT devices there would be no need to keep NAT alive.
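The arithmetic is easy to check directly. The short sketch below computes the sizes of the 32-, 64-, and 128-bit address spaces and how many 64-bit addresses each of the 35 billion devices would get. The helper `address_count` is just a name chosen for this example.

```python
def address_count(bits: int) -> int:
    """Number of distinct addresses representable in `bits` bits."""
    return 2 ** bits


IOT_DEVICES = 35_000_000_000  # device count quoted in the text

for bits in (32, 64, 128):
    print(f"{bits:>3}-bit: {address_count(bits):,} addresses")

# Whole addresses available per device in a 64-bit space.
print(f"per device (64-bit): {address_count(64) // IOT_DEVICES:,}")
```

The per-device figure comes out at roughly 527 million addresses, so exhaustion would not be the constraint at that scale.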

Backward compatibility is easier to maintain

Treating existing IPv4 addresses as 0.0.0.0.x.x.x.x means current IPv4 packets function as a subset of the new protocol. Routers could forward addresses where the upper 4 octets are 0.0.0.0 as IPv4-compatible. The turbulent dual-stack period could have been dramatically shortened.
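Here is a minimal sketch of how that compatibility rule might look in code, assuming the hypothetical 8-octet dotted notation proposed above. None of this corresponds to an existing standard, and all function names are invented for illustration.

```python
import ipaddress


def parse_octet8(text: str) -> int:
    """Parse the hypothetical 8-octet dotted notation into a 64-bit integer."""
    parts = text.split(".")
    if len(parts) != 8:
        raise ValueError("expected exactly 8 octets")
    value = 0
    for part in parts:
        octet = int(part)
        if not 0 <= octet <= 255:
            raise ValueError(f"octet out of range: {part}")
        value = (value << 8) | octet
    return value


def from_ipv4(ipv4: str) -> str:
    """Map an existing IPv4 address into the 0.0.0.0.x.x.x.x compatibility space."""
    return "0.0.0.0." + str(ipaddress.IPv4Address(ipv4))


def is_ipv4_compatible(text: str) -> bool:
    """True when the upper 4 octets are all zero."""
    return parse_octet8(text) >> 32 == 0


print(from_ipv4("192.168.1.100"))
print(is_ipv4_compatible("0.0.0.0.192.168.1.100"))
print(is_ipv4_compatible("10.48.0.0.192.168.1.100"))
```

A router following this rule would only need to test the upper 32 bits to decide whether a packet can be treated as IPv4-compatible.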

Human-readable notation is preserved

IPv6’s 2001:0db8:85a3:0000:0000:8a2e:0370:7334 is awkward in field troubleshooting, log review, and firewall rule writing. The 8-octet notation would be readable by anyone familiar with IPv4.

# Current IPv4
192.168.1.100

# Extended 8-octet proposal
0.0.0.0.192.168.1.100 (IPv4 compatibility space)
10.48.0.0.192.168.1.100 (example new global address space)

Practical challenges do exist

Of course, this isn’t a perfect design.

- 64 bits might not be sufficient for future massive-scale IoT or AI agent networks (IPv6’s 128-bit space leaves far more headroom)

- Router address processing logic would need changing — a heavy change for 1990s hardware

- Security features (authentication, encryption) are not solved by address extension alone

Even so, “just increase the octet count without changing the notation” seems like it could have been a realistic choice, given the migration costs of an IPv6 that has still not fully spread after 30 years.

IPv6’s designers surely understood this dilemma, and they had reasons to choose the clean break anyway. But the result is that half the world still runs on IPv4. That fact carries weight.

Personal take: NAT’s bottleneck is also a copper wire problem

There is one more perspective I want to add.

NAT’s bottleneck is not just the processing cost of address translation. The fundamental issue is that today’s networking equipment, every time it receives a signal carried over optical fiber, converts it to an electrical signal before processing. Electrical signals generate heat, suffer interference, and degrade. NAT translation also happens on these electrical circuits, so the limits become apparent as session counts scale.

NTT’s IOWN (Innovative Optical and Wireless Network) initiative, and its core component the APN (All-Photonics Network), aims to rethink this structure from the ground up.

The conventional architecture looks like this:

Optical fiber → [O/E conversion] → Router (electrical processing) → [E/O conversion] → Optical fiber

What APN aims for is this:

Optical fiber → Router (optical processing) → Optical fiber

An end-to-end “optical wavelength path” is established, transferring and controlling packets without electrical conversion. IOWN extends photonic technology beyond the network layer to devices and semiconductors as well. Compared to today, NTT targets power consumption cut to 1/100, transmission capacity raised 125×, and end-to-end latency cut to 1/200.

NAT processing costs drop at the root

Remove electrical conversion, and the latency and heat it causes disappear. The session-count wall that CGNAT faces could be significantly relieved. It becomes clear again that the root cause of “NAT is slow” lies in the physical layer rather than in the protocol.

IPv4’s lifespan may extend even further

Paradoxically, if APN becomes widespread, the situation of “NAT is still fine” may persist even longer. But removing the processing bottleneck means more devices can be handled by fewer pieces of equipment. With power consumption dropping dramatically, the running-cost structure of infrastructure will change.

A breakthrough on a different axis than address design

The IPv4-or-IPv6, 8-octet-or-128-bit debate is about address space and routing logic. IOWN/APN brings a breakthrough on a different axis — physical transfer speed, power consumption, and latency.

Protocol design and physical infrastructure evolution proceed on separate tracks. If APN creates a “foundation that handles any protocol fast and with low latency,” then IPv4, IPv6, or any new address scheme can run on top — more options, more flexibility.

NTT is targeting practical use in the 2030s, and it remains at the research and demonstration stage today. But the concept of “processing signals arriving over optical fiber without converting back to electricity — all-optical” clearly holds the potential to fundamentally transform the physical foundation of the internet. It is worth watching alongside the debate on address design.